Granite-4.0-1b-speech

Model Summary: Granite-4.0-1b-speech is a compact and efficient speech-language model, specifically designed for multilingual automatic speech recognition (ASR) and bidirectional automatic speech translation (AST).

The model was trained on a collection of public corpora comprising diverse datasets for ASR and AST, as well as synthetic datasets tailored to support Japanese ASR, keyword-biased ASR and speech translation. Granite-4.0-1b-speech was trained by modality-aligning granite-4.0-1b-base to speech on publicly available open-source corpora containing audio inputs and text targets. Compared to granite-speech-3.3-2b and granite-speech-3.3-8b, this model has the following additional capabilities and improvements:

- Supports multilingual speech inputs in English, French, German, Spanish, Portuguese and Japanese,

- Provides higher transcription accuracy for English ASR and faster inference through better encoder training and speculative decoding,

- Has half the number of parameters of granite-speech-3.3-2b for running on resource-constrained devices,

- Adds keyword list biasing capability for enhanced name and acronym recognition.

Evaluations:

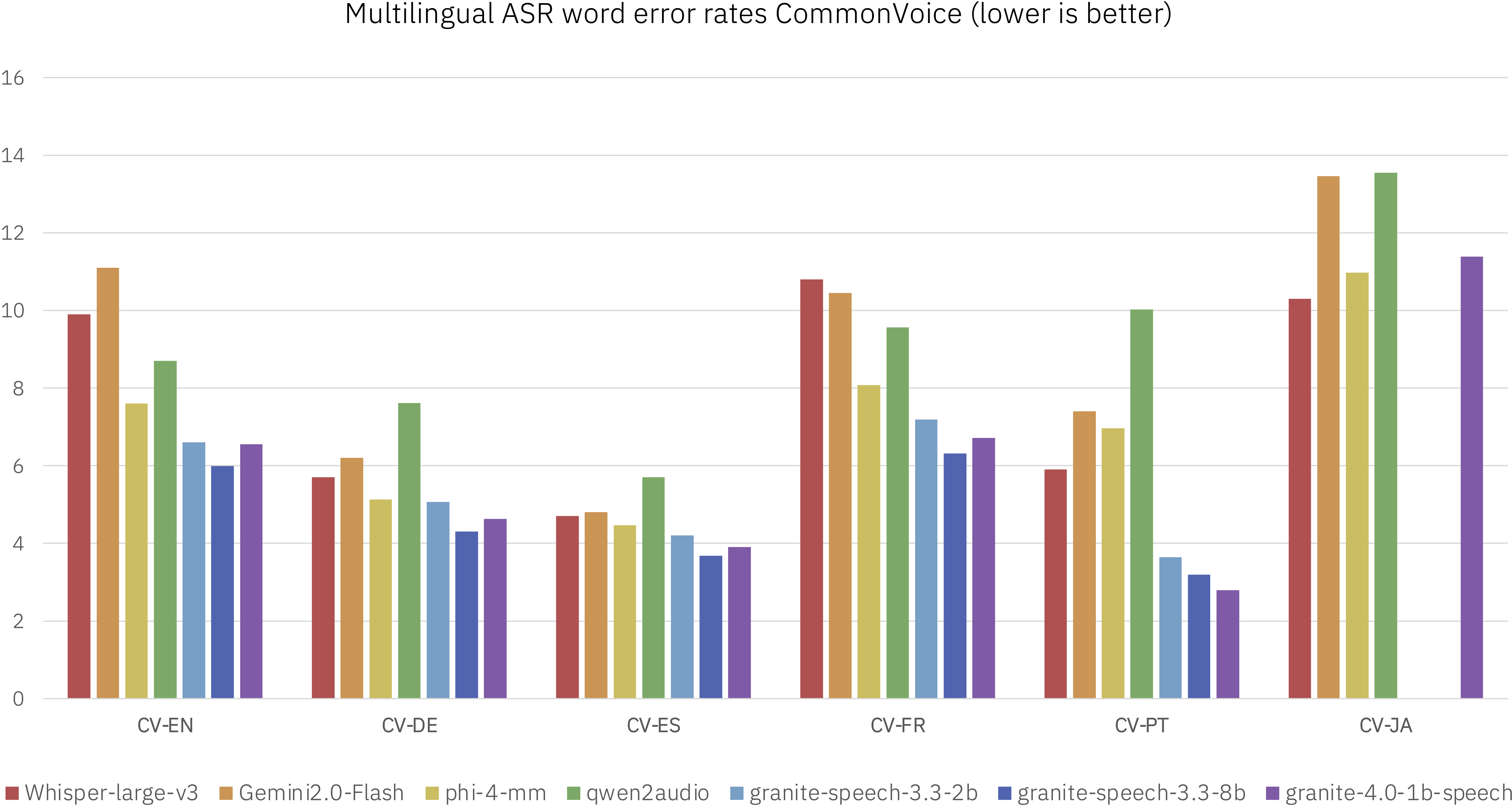

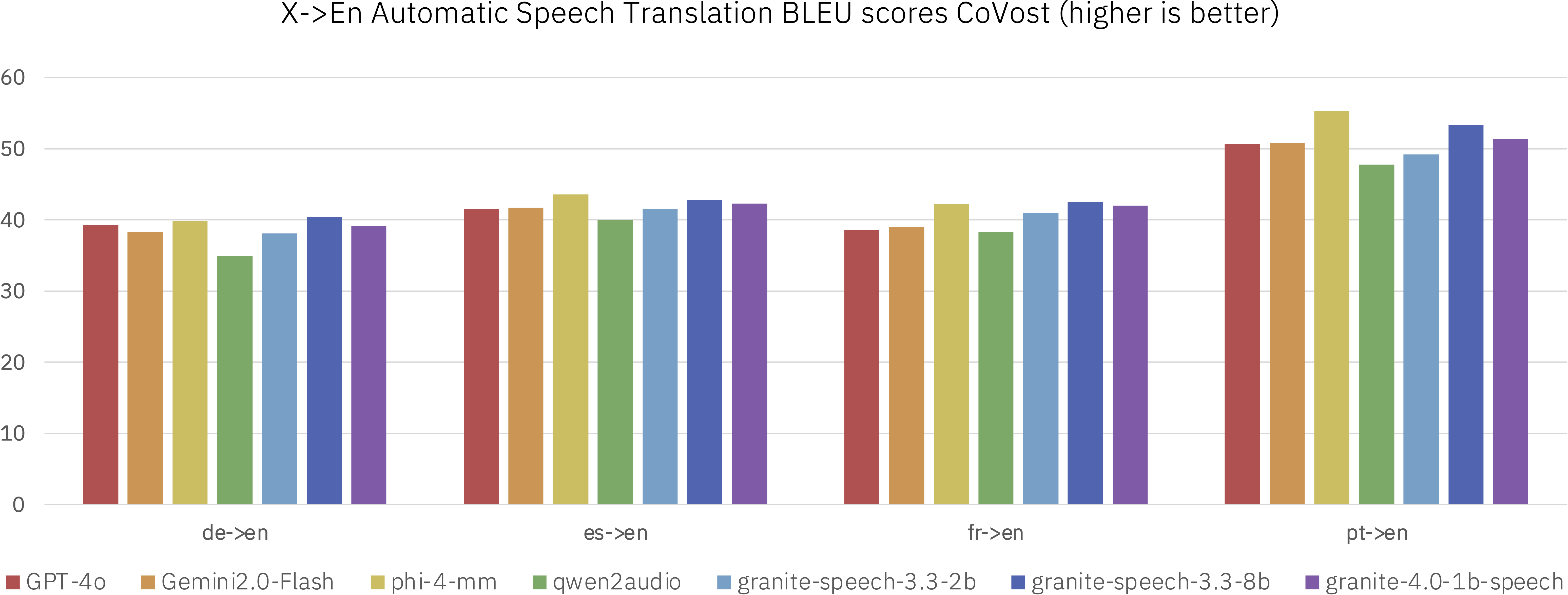

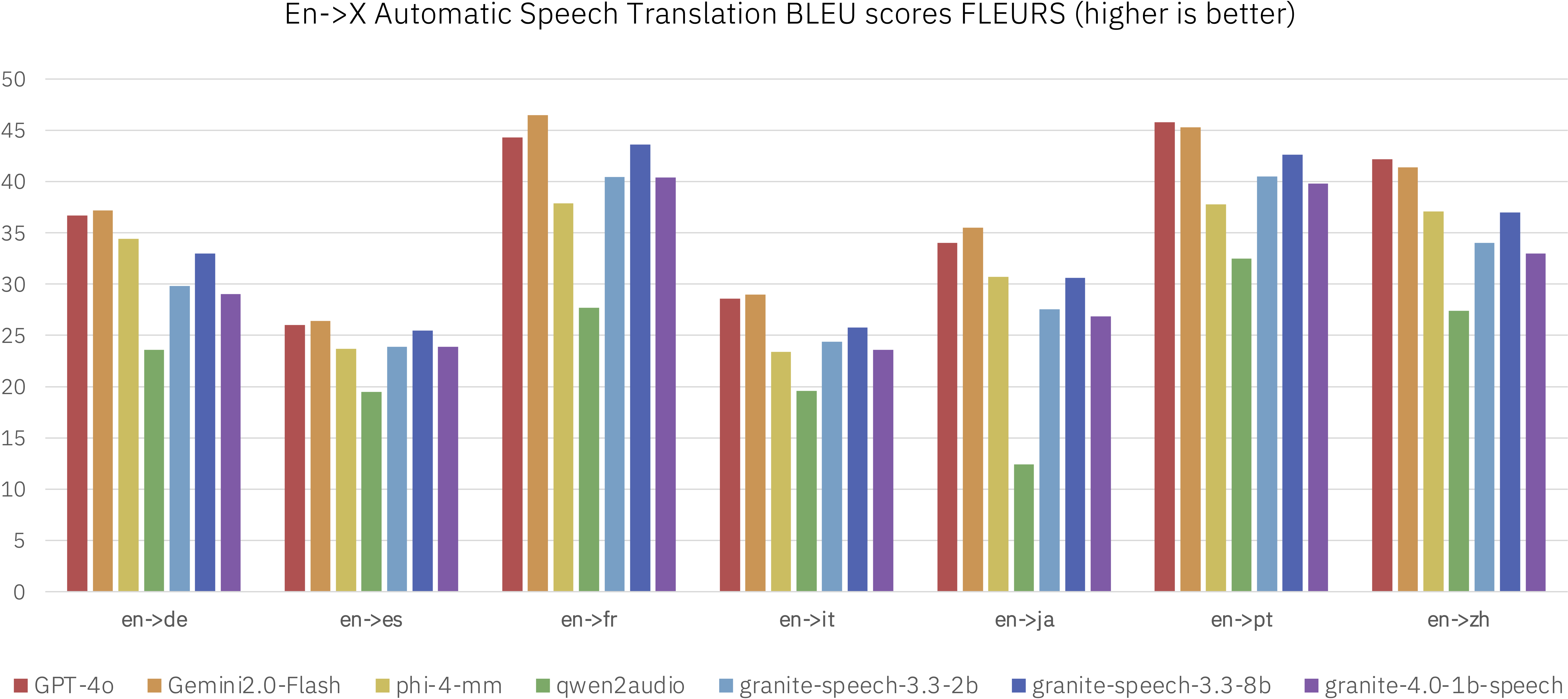

We evaluated granite-4.0-1b-speech alongside other speech-language models with fewer than 8B parameters, as well as dedicated ASR and AST systems, on standard benchmarks. The evaluation spanned multiple public benchmarks, with particular emphasis on English ASR tasks, while also including multilingual ASR and AST for X-En and En-X translations.

Performance on HuggingFace Open ASR leaderboard:

| Model | Average WER | RTFx | AMI | Earnings22 | Gigaspeech | LS Clean | LS Other | SPGISpeech | Tedlium | Voxpopuli |
|---|---|---|---|---|---|---|---|---|---|---|
| ibm-granite/granite-4.0-1b-speech | 5.52 | 280.02 | 8.44 | 8.48 | 10.14 | 1.42 | 2.85 | 3.89 | 3.1 | 5.84 |
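The Average WER column can be reproduced from the per-dataset columns of the row above. The following is a minimal Python sketch, not an official evaluation script: the names `wer_by_dataset`, `average_wer` and `seconds_to_transcribe` are illustrative, the average is assumed to be an unweighted mean over the eight datasets, and RTFx is assumed to be the inverse real-time factor (audio duration divided by processing time).

```python
# Per-dataset WER (%) for ibm-granite/granite-4.0-1b-speech, copied from the table.
wer_by_dataset = {
    "AMI": 8.44,
    "Earnings22": 8.48,
    "Gigaspeech": 10.14,
    "LS Clean": 1.42,
    "LS Other": 2.85,
    "SPGISpeech": 3.89,
    "Tedlium": 3.1,
    "Voxpopuli": 5.84,
}
RTFX = 280.02  # copied from the table


def average_wer(scores):
    """Unweighted mean over datasets (assumption about how the average is formed)."""
    return sum(scores.values()) / len(scores)


def seconds_to_transcribe(audio_seconds, rtfx=RTFX):
    """Estimated processing time, assuming RTFx = audio duration / processing time."""
    return audio_seconds / rtfx


avg = average_wer(wer_by_dataset)
print(f"Average WER: {avg:.2f}")  # agrees with the 5.52 reported in the table
print(f"One hour of audio: about {seconds_to_transcribe(3600):.1f} s of compute")
```

The throughput estimate only holds on hardware and batch settings comparable to those used for the leaderboard measurement.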

Release Date: March 6, 2026

License: Apache 2.0

Supported Languages: English, French, German, Spanish, Portuguese, Japanese

Intended Use: The model is intended to be used in enterprise applications that involve processing of speech inputs. In particular, the model is well-suited for English, French, German, Spanish, Portuguese and Japanese speech-to-text and speech translations to and from English for the same languages, plus English-to-Italian and English-to-Mandarin.

Model Architecture:

The architecture of granite-4.0-1b-speech consists of the following components:

(1) Speech encoder: 16 conformer blocks trained with Connectionist Temporal Classification (CTC) on character-level targets, using only the ASR corpora (see configuration below). The character vocabulary consists of the first 256 ASCII entries for the European languages plus a set of 92 phonetic Katakana characters for Japanese. In addition, the CTC encoder uses block attention with 4-second audio blocks and self-conditioned CTC from the middle layer.

| Configuration parameter | Value |
|---|---|
| Input dimension | 160 (80 logmels x 2) |
| Number of layers | 16 |
| Hidden dimension | 1024 |
| Number of attention heads | 8 |
| Attention head size | 128 |
| Convolution kernel size | 15 |
| Output dimension | 348 |
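The configuration values are mutually consistent, which the short Python sketch below checks. It also illustrates one plausible reading of block attention, in which a frame attends only to frames inside its own fixed-size block. That mask layout, the 50 Hz encoder frame rate, and the helper name `block_attention_mask` are assumptions for illustration; the text does not spell out the exact masking scheme.

```python
# Values copied from the encoder description and configuration table.
N_LOGMELS, STACKED_FRAMES, INPUT_DIM = 80, 2, 160
N_HEADS, HEAD_SIZE, HIDDEN_DIM = 8, 128, 1024
ASCII_ENTRIES, KATAKANA_CHARS, OUTPUT_DIM = 256, 92, 348

# Each table entry follows from the quantities named in the text.
assert N_LOGMELS * STACKED_FRAMES == INPUT_DIM      # 80 logmels x 2 stacked frames
assert N_HEADS * HEAD_SIZE == HIDDEN_DIM            # 8 heads of size 128
assert ASCII_ENTRIES + KATAKANA_CHARS == OUTPUT_DIM  # 256 ASCII + 92 Katakana

# Assumption: 100 Hz log-mel frames stacked in pairs give 50 encoder frames/s.
ENCODER_FRAME_RATE_HZ = 50
BLOCK_SECONDS = 4  # from the text
frames_per_block = ENCODER_FRAME_RATE_HZ * BLOCK_SECONDS


def block_attention_mask(n_frames, block_frames):
    """Return mask[i][j] == True when frame i may attend to frame j.

    Assumed scheme: attention is restricted to frames in the same block.
    """
    return [
        [i // block_frames == j // block_frames for j in range(n_frames)]
        for i in range(n_frames)
    ]


# Tiny example: 6 frames in blocks of 2 gives a block-diagonal pattern.
for row in block_attention_mask(6, 2):
    print("".join("x" if allowed else "." for allowed in row))
```

Restricting attention to fixed blocks keeps the attention cost linear in audio length, since each frame compares against at most `frames_per_block` others.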

(2) Speech projector and temporal downsampler (speech-text modality adapter): a 2-layer window query transformer (q-former) operates on blocks of 15 acoustic embeddings of dimension 1024 taken from the last conformer block of the speech encoder. Each block is downsampled by a factor of 5 using 3 trainable queries per block and per layer. The total temporal downsampling factor is 10 (2x from the encoder and 5x from the projector), resulting in a 10 Hz acoustic embedding rate for the LLM. The projector and LLM LoRA adapters were trained jointly on all the corpora mentioned under Training Data.
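The downsampling arithmetic can be traced step by step. The Python sketch below assumes a 100 Hz log-mel frame rate, which is what a 10x reduction to 10 Hz implies but is not stated directly, and it assumes the last partial q-former block is padded to a full block. The function name `llm_embeddings_for` is illustrative.

```python
import math

LOGMEL_FRAME_RATE_HZ = 100  # assumption implied by 10x downsampling to 10 Hz
ENCODER_DOWNSAMPLING = 2    # from the text
QFORMER_BLOCK_SIZE = 15     # encoder frames per q-former block (from the text)
QUERIES_PER_BLOCK = 3       # trainable queries per block (from the text)

# 15 frames in, 3 embeddings out: a 5x reduction inside the projector.
projector_downsampling = QFORMER_BLOCK_SIZE // QUERIES_PER_BLOCK
total_downsampling = ENCODER_DOWNSAMPLING * projector_downsampling
llm_embedding_rate_hz = LOGMEL_FRAME_RATE_HZ / total_downsampling


def llm_embeddings_for(audio_seconds):
    """Number of acoustic embeddings passed to the LLM for a given duration.

    Assumes a trailing partial block is padded to a full block of 15 frames.
    """
    encoder_frames = math.ceil(
        audio_seconds * LOGMEL_FRAME_RATE_HZ / ENCODER_DOWNSAMPLING
    )
    blocks = math.ceil(encoder_frames / QFORMER_BLOCK_SIZE)
    return blocks * QUERIES_PER_BLOCK


print(total_downsampling, llm_embedding_rate_hz)
print(llm_embeddings_for(30))  # a 30-second clip
```

At this rate a 30-second utterance occupies about 300 positions of the LLM context, which is small relative to the 128k context length of the base model.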

(3) Large language model: granite-4.0-1b-base with 128k context length (https://huggingface.co/ibm-granite/granite-4.0-1b-base) finetuned on all the corpora mentioned under Training Data.

Training Data:

Overall, our training data comes largely from two key sources: (1) publicly available datasets and (2) synthetic data created from publicly available datasets, specifically targeting Japanese ASR, keyword list-prompted ASR and the speech translation task. A detailed description of the training datasets can be found in the table below:

Infrastructure: We train Granite Speech using IBM's supercomputing cluster, Blue Vela, which is outfitted with NVIDIA H100 GPUs. This cluster provides a scalable and efficient infrastructure for training our models over thousands of GPUs. The training of this particular model was completed in 30 days (26 for the encoder and 4 for the projector) on 8 H100 GPUs.
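For a rough sense of scale, the stated training time can be converted to GPU-hours. This Python sketch assumes all 8 GPUs were in continuous use for the full duration of each stage, which the text does not confirm, so the figures are upper-bound estimates; the helper name `gpu_hours` is illustrative.

```python
GPUS = 8             # from the text
ENCODER_DAYS = 26    # from the text
PROJECTOR_DAYS = 4   # from the text
HOURS_PER_DAY = 24


def gpu_hours(days, gpus=GPUS):
    """GPU-hours for a stage, assuming every GPU runs around the clock."""
    return days * HOURS_PER_DAY * gpus


encoder_gpu_hours = gpu_hours(ENCODER_DAYS)
projector_gpu_hours = gpu_hours(PROJECTOR_DAYS)
total_gpu_hours = encoder_gpu_hours + projector_gpu_hours

print(encoder_gpu_hours, projector_gpu_hours, total_gpu_hours)
```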

Ethical Considerations and Limitations:

The use of large speech and language models can trigger certain risks and ethical considerations. Although our alignment processes include safety considerations, the model may in some cases produce inaccurate, biased, offensive or unwanted responses to user prompts. Additionally, it remains uncertain whether smaller models are more susceptible to hallucination in generation scenarios because of their reduced size, which could limit their ability to generate coherent and contextually accurate responses. This is an active area of research, and we anticipate more rigorous exploration, comprehension, and mitigations in this domain.

IBM recommends using this model for automatic speech recognition and translation tasks. The model's design improves safety by limiting how audio inputs can influence the system. If an unfamiliar or malformed prompt is received, the model simply ignores it and performs transcription, which is the default fallback mode. This minimizes the risk of adversarial inputs, unlike integrated models that directly interpret audio and may be more exposed to such attacks. Note that more general speech tasks may pose higher inherent risks of triggering unwanted outputs.

To enhance safety, we recommend using granite-4.0-1b-speech alongside Granite Guardian. Granite Guardian is a fine-tuned instruct model designed to detect and flag risks in prompts and responses across key dimensions outlined in the IBM AI Risk Atlas.

Resources

- 📄 Read the technical report: https://arxiv.org/abs/2505.08699

- 🔧 Notebook: Finetune on custom data

- ⭐️ Learn about the latest updates with Granite: https://www.ibm.com/granite

- 🚀 Get started with tutorials, best practices, and prompt engineering advice: https://www.ibm.com/granite/docs/

- 💡 Learn about the latest Granite learning resources: https://ibm.biz/granite-learning-resources

